OME-NGFF is a data format technology that addresses the demands of image processing workflows at scale and in the cloud.

Note: If you are not yet familiar with Next-Generation File Formats (NGFF) such as OME-NGFF, check out our previous blog post.

Improvements in sample handling, probe technologies, and spatial profiling modalities in the last 10 years have combined to make the recording of GByte- and TByte-sized datasets routine across the life sciences R&D sector. The value and challenges presented by these datasets are not solely in their size. The conversion of raw data to understandings, insights and hypotheses demands a connection between the raw data and derived measurements such as ROIs, masks, and calculated features. These elements must be calculated, stored and integrated for downstream analysis, visualization, sharing, and publication. While focus often lies on growth in data volumes, increases in data complexity and growing aspirations to derive as much value from these data as possible require a complete re-evaluation of the technologies used for storing, processing and understanding bioimaging data.

Glencoe Software, along with our colleagues at OME, have been leading an effort to optimize storage and access concepts for large, multi-dimensional bioimaging datasets used in high content screening (HCS), light-sheet fluorescence microscopy (LSFM), and digital pathology (sometimes referred to as whole slide imaging, or “WSI”). In all these cases, large images or datasets must be accessed and processed using high performance computing (HPC) or cloud resources. To enable efficient access, datasets must be processed in chunks, ideally with parallelization of that processing. These large datasets are rarely stored locally, with researchers often relying on institutional network storage or cloud offerings like object storage. The download of entire datasets from these locations to a local workstation is both impractical and expensive.

We refer to these new formats and data access patterns as OME-NGFF. An introduction to our work on the open specification, including examples of using OME-NGFF, is available in our recent blog post.

Here, we describe how both binary image data and derived analytical data can be represented in OME-NGFF for image processing workflows. We show how OME-NGFF meets the increasing requirements of multi-dimensional data complexity and enables experimental scientists, data analysts and systems engineers to work together on these challenges.

Scalability and usability

The bioimaging community has built a powerful library of image processing and analysis tools that rely on either real-time translation of proprietary file formats via Bio-Formats or conversion to plane-based formats like OME-TIFF. While powerful, these approaches aren’t well-suited for the highly-parallel, arbitrary access requirements of modern datasets and workflows. Converting data to a single, open format like OME-NGFF allows the construction of scalable analysis pipelines with uniform methods for data reading and writing, improving interoperability.

As read operations increase in number, any access latencies compound to produce major I/O limitations on workflow speed. Pre-chunking data in OME-NGFF lowers the latency of data access (see our recently reported benchmarking for details). For cloud deployments using object storage, this strategy can achieve accelerations in data access times of 1 to 2 orders of magnitude when compared to monolithic file formats. Chunking does, however, produce numerous files, making moves of this data expensive. Using OME-NGFF, therefore, motivates localizing compute resources close to data storage, rather than moving data between sites. This is consistent with elastic and ephemeral compute resources from various cloud providers.

Integration with open-source Deep Learning methods

Deep Learning (DL) methods for cell and nuclear segmentation such as StarDist and cellpose are growing in popularity among our customers, which is why we have integrated DL segmentation into OMERO Plus. Using OMERO.web and PathViewer, image segmentation can be initiated from the browser and run using compute resources of choice. Glencoe’s application of DL image segmentation reads image data directly from the OME-NGFF format, resolving the issues of file format diversity, allowing for high worker counts in parallel processing, and adding flexibility between using file or object storage. The outputs of our image processing workflows are also written back to OME-NGFF for visualization of hundreds of thousands to millions of objects in PathViewer (see more in Figure 1 and the section below).

Therefore, we know from experience that integrating OME-NGFF into image processing workflows offers real advantages for flexibility and scalability.

Common image processing outputs

Segmentation of objects (cells, nuclei, RNA puncta, etc.) in modern datasets produces hundreds of thousands to millions of objects. Further, object-level morphometric, spatial and intensity-based measurements can yield highly specialized cell and tissue classification algorithms. The results derived from raw binary data are large, heterogeneous and multi-dimensional, and they must be accessible and integrated with the raw data.

How are “objects” in an image represented?

The term “label image” describes an array that is typically the same size in its spatial dimensions as the original image, but the array (pixel) values refer to an identity or classification produced from a segmentation workflow. OME-NGFF allows label image data to be stored alongside original binary image data. Therefore, a single data access pattern can be shared between original and derived data, simplifying and unifying image processing input and output.

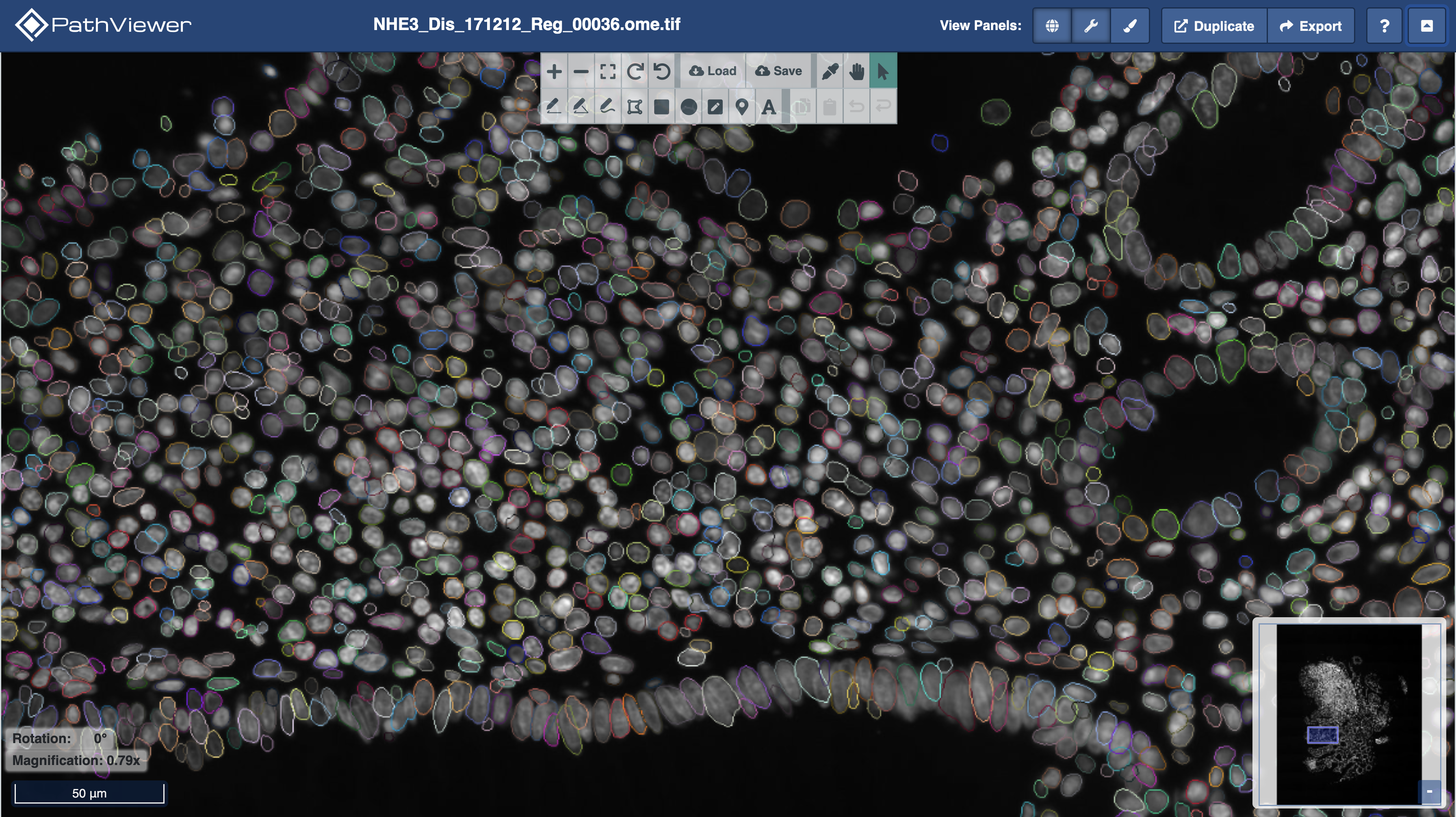

Figure 1. OMERO Plus and PathViewer support visualization of OME-NGFF images and label images. The multi-colored outlines are the output of StarDist nuclear segmentation with a pre-trained model. Colors are random. The label image and underlying image data (in greyscale) are in OME-NGFF.

Figure 1. OMERO Plus and PathViewer support visualization of OME-NGFF images and label images. The multi-colored outlines are the output of StarDist nuclear segmentation with a pre-trained model. Colors are random. The label image and underlying image data (in greyscale) are in OME-NGFF.

What about object-level measurements?

In addition to a label image representing segmented objects, each object is often characterized by a variety of morphometric, spatial or intensity-based measurements. From these measurements, objects can be classified into types. Visualizations can be enhanced by linking object overlays with data tables containing measurements matched to each object.

Object-level measurements are typically tabular data that require efficient querying across hundreds of columns and millions of rows. While OME-NGFF is a performant solution for large and multidimensional array data, it is not a one-size-fits-all solution for any data storage. Technologies such as columnar databases and geo-databases offer advantages for querying tabular segmentation results compared to CSV and other row-oriented data stores.

In OMERO Plus, object-level measurements are already supported by OMERO.tables, which uses PyTables and HDF5, and can be linked with images and label images to be visualized in PathViewer. Check out our recent demo at the OME Community Meeting here and Figure 2 below.

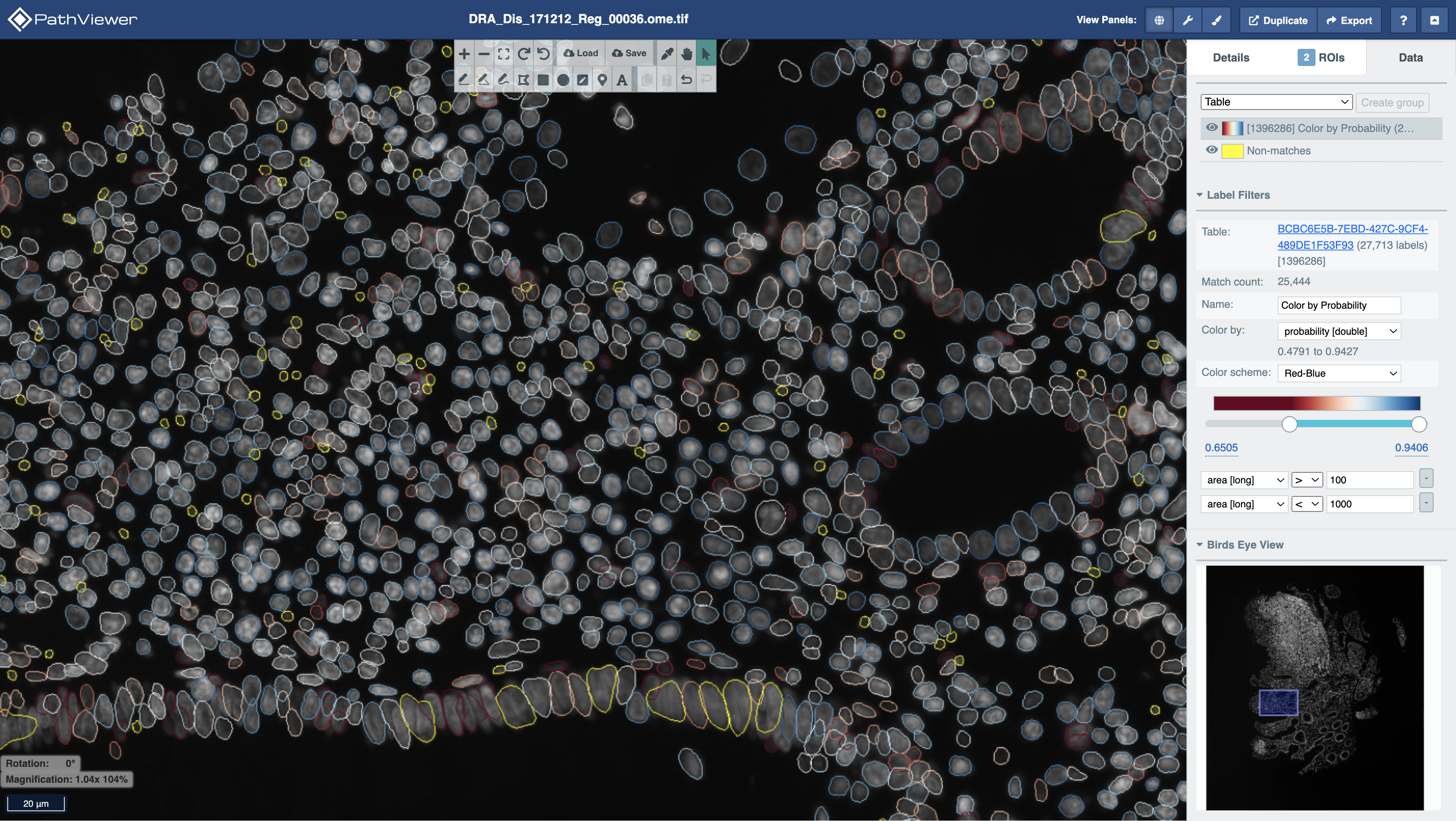

Figure 2. In PathViewer, label images can be “filtered” to show objects matching a particular query or “re-colored” based on the measurement values in a particular data table column. Here, StarDist segmented nuclei are filtered based on their area in pixels (non-matches in yellow) and colored by their probability (red to blue, low to high).

Figure 2. In PathViewer, label images can be “filtered” to show objects matching a particular query or “re-colored” based on the measurement values in a particular data table column. Here, StarDist segmented nuclei are filtered based on their area in pixels (non-matches in yellow) and colored by their probability (red to blue, low to high).

Summary

Together, OMERO Plus and PathViewer, using OME-NGFF and OMERO.tables, offer a data management, analysis and visualization suite for your array-based and tabular analytical outputs that considers the needs of data engineers, image analysts, and quantitative pathologists alike.